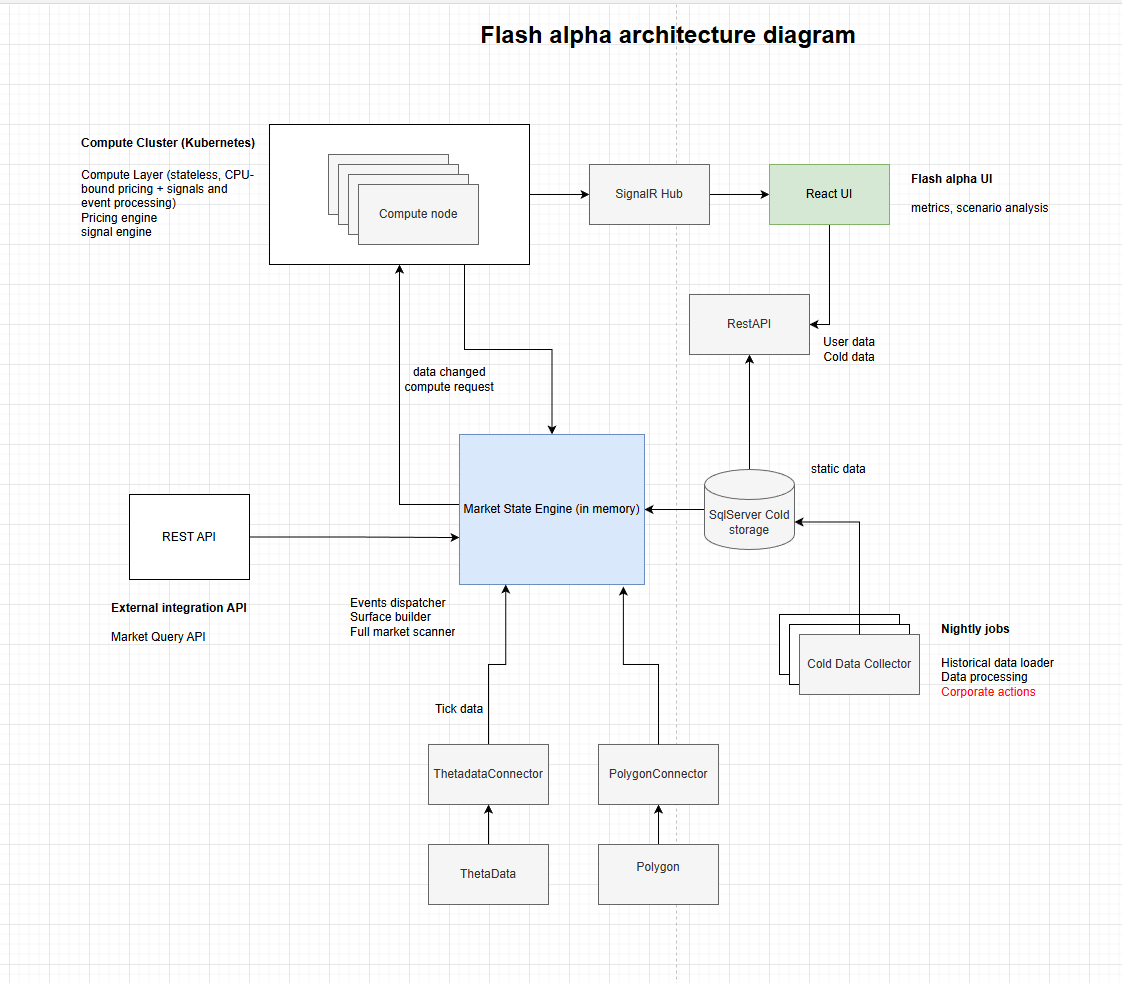

FlashAlpha System Architecture

A detailed breakdown of our low-latency compute cluster, from OPRA ingestion to SignalR delivery.

1. Ingestion Layer (ThetaData / Polygon)

FlashAlpha ingests raw market data from multiple providers for redundancy. We use ThetaData and Polygon for real-time tick and consolidated quote feeds.

- ThetaDataConnector & PolygonConnector: Specialized services that handle raw socket connections, managing heartbeat logic and reconnection strategies.

- Tick Data Normalization: Incoming packets are normalized into a standard internal format before being passed to the engine.
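To make the normalization step concrete, here is a minimal sketch of mapping two differently shaped provider payloads onto one internal record. The `Tick` fields and both payload layouts (`provider_a`, `provider_b`) are hypothetical and chosen for illustration only; they are not the actual ThetaData or Polygon wire formats, nor FlashAlpha's internal schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tick:
    """Hypothetical internal tick format shared by all providers."""
    symbol: str
    price: float
    size: int
    ts_ns: int   # event time, nanoseconds since the Unix epoch
    source: str


def normalize_provider_a(msg: dict) -> Tick:
    # Assumed payload: price in integer cents, time in milliseconds.
    return Tick(
        symbol=msg["root"],
        price=msg["price_cents"] / 100,
        size=msg["size"],
        ts_ns=msg["ms_of_epoch"] * 1_000_000,
        source="provider_a",
    )


def normalize_provider_b(msg: dict) -> Tick:
    # Assumed payload: short keys, float price, time already in nanoseconds.
    return Tick(
        symbol=msg["sym"],
        price=float(msg["p"]),
        size=int(msg["s"]),
        ts_ns=int(msg["t"]),
        source="provider_b",
    )
```

Once both feeds produce the same record type, downstream components only need to understand one format, whichever provider supplied the data.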

2. The Market State Engine (In-Memory)

At the core of the platform is the Market State Engine. This is a monolithic, high-performance in-memory state manager.

- Zero-Copy Processing: Designed to minimize GC pressure by using struct-based layouts and unmanaged memory where necessary.

- State Management: Maintains the "live" state of the entire option market (potentially more than 1.8M instruments).

- Events Dispatcher: Routes changes (price updates, volume spikes) to the appropriate sub-systems (Surface Builder, Scanner).
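The state-plus-dispatch pattern described above can be sketched in a few lines. This is a toy illustration, not the engine itself: the class name `MarketState`, the `price_update` event name, and the handler signature are all assumptions, and it makes no attempt to show the zero-copy memory layout.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[str, float], None]


class MarketState:
    """Toy in-memory state: last price per instrument plus event routing."""

    def __init__(self) -> None:
        self.last_price: Dict[str, float] = {}
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def on_tick(self, instrument: str, price: float) -> None:
        previous = self.last_price.get(instrument)
        self.last_price[instrument] = price
        # Only dispatch when the stored state actually changed.
        if previous != price:
            for handler in self._handlers["price_update"]:
                handler(instrument, price)


# Usage: a hypothetical subscriber (standing in for a scanner) records updates.
state = MarketState()
seen = []
state.subscribe("price_update", lambda inst, px: seen.append((inst, px)))
state.on_tick("OPT-1", 12.35)
state.on_tick("OPT-1", 12.35)   # unchanged price, no event
state.on_tick("OPT-1", 12.40)
```

Dispatching only on change keeps subscribers from doing work on ticks that leave the state untouched.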

3. Compute Cluster (Kubernetes)

Heavy computational tasks are offloaded to a scalable Kubernetes cluster.

- Stateless Compute Nodes: These nodes handle CPU-bound signals, pricing models (Black-Scholes, Binomial), and event processing.

- Horizontal Scaling: As market activity increases (e.g., market open), K8s spawns additional compute pods to handle the load.
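As an example of the kind of stateless, CPU-bound work such a node might run, here is a minimal sketch of the Black-Scholes price for a European option with no dividends. The function and parameter names are illustrative assumptions; the sketch requires positive volatility and time to expiry and does not cover the binomial model.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes(spot: float, strike: float, rate: float,
                  vol: float, t_years: float, is_call: bool = True) -> float:
    """European option price; assumes vol > 0, t_years > 0, no dividends."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discount = math.exp(-rate * t_years)
    if is_call:
        return spot * norm_cdf(d1) - strike * discount * norm_cdf(d2)
    return strike * discount * norm_cdf(-d2) - spot * norm_cdf(-d1)
```

Because the function depends only on its arguments, any pod can price any contract, which is what makes this style of workload straightforward to scale horizontally.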

4. Data Storage (Hot & Cold)

We employ a tiered storage strategy:

- In-Memory (Hot): The Market State Engine holds live data for immediate query and display.

- SQL Server (Cold): All trade data, aggregated metrics, and historical snapshots are persisted to SQL Server for post-day analysis and cold retrieval.

- Cold Data Contractors: Nightly jobs that run historical data loading and handle Corporate Actions processing to keep reference data pristine.
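The hot/cold split can be sketched as follows. Python's built-in `sqlite3` stands in for SQL Server purely so the example runs anywhere; the `snapshots` table, its columns, the function names, and the sample price are all hypothetical.

```python
import sqlite3
from typing import Dict, Optional

# Cold tier: sqlite3 is a stand-in for SQL Server in this sketch.
cold = sqlite3.connect(":memory:")
cold.execute("CREATE TABLE snapshots (symbol TEXT, trade_date TEXT, close REAL)")

# Hot tier: live values held in memory (made-up sample value).
hot: Dict[str, float] = {"SPY": 475.31}


def live_price(symbol: str) -> Optional[float]:
    """Immediate lookup against the in-memory tier."""
    return hot.get(symbol)


def persist_snapshot(trade_date: str) -> None:
    """Copy the current hot values into the cold store."""
    with cold:  # commits on success, rolls back on error
        cold.executemany(
            "INSERT INTO snapshots VALUES (?, ?, ?)",
            [(sym, trade_date, px) for sym, px in hot.items()],
        )


def historical_close(symbol: str, trade_date: str) -> Optional[float]:
    """Cold retrieval for analysis after the trading day."""
    row = cold.execute(
        "SELECT close FROM snapshots WHERE symbol = ? AND trade_date = ?",
        (symbol, trade_date),
    ).fetchone()
    return row[0] if row else None
```

Keeping the two read paths separate means live queries never wait on the database, while historical queries never touch the in-memory engine.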

5. User Interface & API

Delivery to the client must be as fast as the backend.

- SignalR Hub: Pushes real-time updates to connected clients over WebSockets. This avoids polling and reduces latency for the end user.

- React UI: A modern, responsive frontend that renders complex volatility surfaces and metrics grids efficiently.

- REST API: Provides programmatic access to user data and "cold" historical data.
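The push model behind the hub can be illustrated with a small in-process publish/subscribe sketch. This is not SignalR and uses no network transport; the `PushHub` class, topic names, and payload fields are assumptions made only to show how subscribers receive updates without polling.

```python
import asyncio
from typing import Dict, Set


class PushHub:
    """Toy push hub: each subscriber owns a queue that publishers fill."""

    def __init__(self) -> None:
        self._subs: Dict[str, Set[asyncio.Queue]] = {}

    def subscribe(self, topic: str) -> asyncio.Queue:
        queue: asyncio.Queue = asyncio.Queue()
        self._subs.setdefault(topic, set()).add(queue)
        return queue

    def publish(self, topic: str, payload: dict) -> None:
        # Deliver to every subscriber of this topic; others see nothing.
        for queue in self._subs.get(topic, ()):
            queue.put_nowait(payload)


async def demo() -> dict:
    hub = PushHub()
    inbox = hub.subscribe("SPY")
    hub.publish("QQQ", {"bid": 401.10, "ask": 401.12})   # not delivered to inbox
    hub.publish("SPY", {"bid": 475.30, "ask": 475.32})
    # The client simply awaits the next update instead of polling for it.
    return await inbox.get()


result = asyncio.run(demo())
```

The subscriber stays idle until data arrives, which is the property that lets a push channel cut both request volume and delivery delay compared with periodic polling.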

Ready to trade on this infrastructure?

Stop building it yourself. Start finding alpha.